Fake Sex Video Scandals: The Next Political Weapon

“Sex, Lies, and Videotape” just took on a whole new meaning.

Imagine being a political candidate, a celebrity, or even the average Joe on the street when accusations of a “sex scandal” erupt. Immediately, headlines crucify you. You adamantly insist the story is false, but then a full-length video is posted online contradicting your denials. The media pounces even harder, the online trolls have their fun, and your entire life’s work is destroyed.

But in reality, the video is a fake. Devastating thought, isn’t it?

This is not a rewind of the Kavanaugh hearings. It’s merely an extension, known as deep-fake. Just as airbrushing and Photoshop made pictures easy to manipulate, digital technology is making the video medium just as vulnerable.

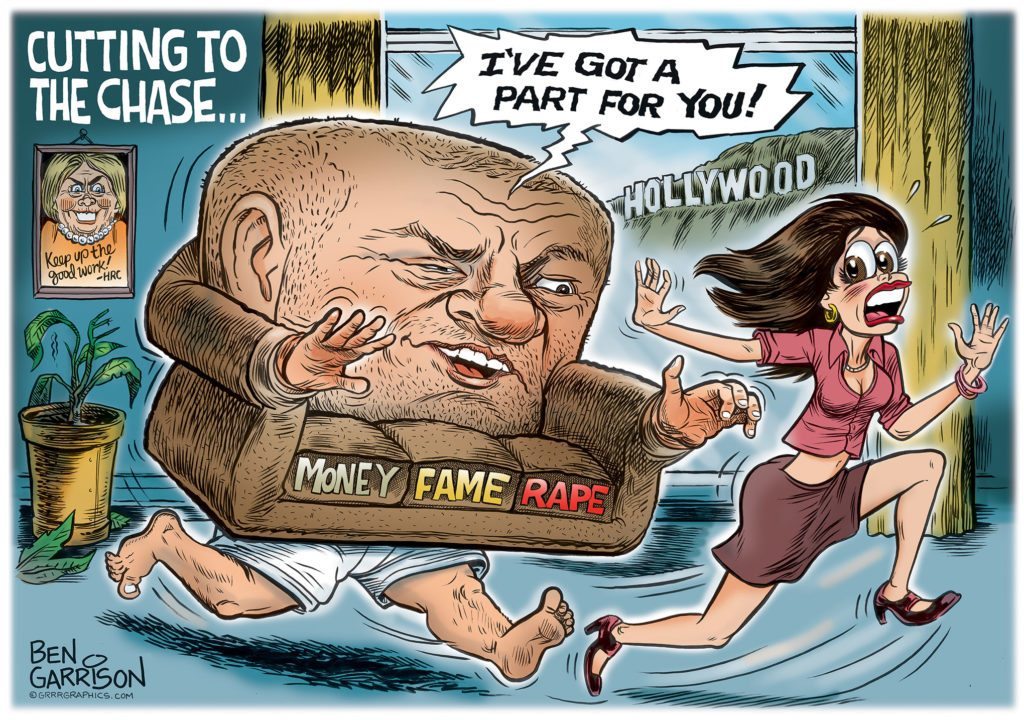

What we’re left with is an inability to distinguish fact from fiction, as anyone can be made to look like Harvey Weinstein.

The Washington Post elaborates:

But the videos have also been weaponized disproportionately against women, representing a new and degrading means of humiliation, harassment and abuse. The fakes are explicitly detailed, posted on popular porn sites and increasingly challenging to detect. And although their legality hasn’t been tested in court, experts say they may be protected by the First Amendment — even though they might also qualify as defamation, identity theft or fraud.

Videos have for decades served as a benchmark for authenticity, offering a clear distinction from photos that could be easily distorted. Fake video, for everyone except high-level artists and film studios, has always been too technically complicated to get right.

But recent breakthroughs in machine-learning technology, employed by creators racing to refine and perfect their fakes, have made fake-video creation more accessible than ever. All that’s needed to make a persuasive mimicry within a matter of hours is a computer and a robust collection of photos, such as those posted by the millions onto social media every day.

The videos range widely in quality, and many are glitchy or obvious cons. But deep-fake creators say the technology is improving rapidly and see no limit to whom they can impersonate.

It doesn’t require much imagination to think of the kind of mayhem this technology aids. Political dirty tricks are headed for a new level.

First Amendment v. Privacy Rights

Some argue First Amendment protections trump all. But tell that to Jennifer Lawrence, Kate Upton, Kirsten Dunst, and over 100 other celebrities who had their private accounts hacked and their photos posted online.

Fox News covered the aftermath:

Federal prosecutors say 26-year-old George Garofano, of North Branford, pleaded guilty Wednesday to unauthorized access to a protected computer to obtain information.

The charge stemmed from the investigation into the 2014 scandal in which the private photos of Jennifer Lawrence, Kirsten Dunst, Kate Upton and others were made public.

Prosecutors say Garofano sent emails that appeared to be from Apple encouraging victims to disclose usernames and passwords. He then used the information to illegally access nearly 250 iCloud accounts.

Garofano, who remains free on $50,000 bond, faces up to five years in prison at sentencing at a date to be determined.

Garofano got only 8 months in jail.

As for personal privacy rights, the San Francisco Chronicle explored the controversy in 2003:

Celebrities have an undeniable right to prevent their images from being used on mugs or merchandise. But legal experts say the Winter brothers’ case seeks to extend that right into new territory: the traditionally protected world of satire, fiction and entertainment.

The dispute highlights one of the hottest legal issues in the entertainment industry. The merchandising of celebrity images has become a booming industry for actors and athletes whose name or face is worth a fortune in advertising. In recent years, Tiger Woods, Dustin Hoffman, Joe Montana and other celebrities have ended up in legal squabbles with artists, illustrators and publishers over control of their multimillion-dollar images.

Courts generally have protected the First Amendment rights of artists and their interpretations of celebrity images. But, to the alarm of the entertainment industry, that trend may be turning.

Since the early 1900s, famous people have enjoyed a “right to publicity” — meaning the right to control the revenues generated from their name or face. The laws were intended to prevent others from capitalizing on a celebrity’s fame on baseball cards or in advertisements and endorsements.

The California Supreme Court shifted the legal landscape in 2001 by ruling that a Southern California artist was barred from selling T-shirts and lithographs with the images of the Three Stooges.

Setting a new standard, the justices said the work must be more than a mere likeness and somehow “transform” the celebrity’s image by adding enough of the artist’s “significant creative elements.”

The Winter brothers sued DC Comics, claiming their likenesses had been used without permission as comic book villains.

The California Supreme Court ruled in favor of DC Comics on First Amendment grounds.

But Is Deep-fake Legal? Possibly!

Wired has pointed out:

It’s a new frontier for nonconsensual pornography and fake news alike. (Doctored videos of political candidates saying outlandish things in 3, 2… .) And worst of all? If you live in the United States and someone does this with your face, the law can’t really help you.

Face-swap porn may be deeply, personally humiliating for the people whose likeness is used, but it’s technically not a privacy issue. That’s because, unlike a nude photo filched from the cloud, this kind of material is bogus. You can’t sue someone for exposing the intimate details of your life when it’s not your life they’re exposing.

In case after case, the First Amendment has protected spoofs and caricatures and parodies and satire. (This is why porn has a long history of titles like Everybody Does Raymond and Buffy the Vampire Layer.) According to Citron, claiming that face-swap porn is parody isn’t the strongest legal argument—it’s clearly exploitative—but that’s not going to stop people from muddying the legal waters with it.

For the average citizen, the best hope is defamation law.

However, the Yale Law Review adds:

This phenomenon is not entirely new; rather, it is just the latest iteration of the old problem of fake pictures and videos, albeit with a new name, using the latest technology. Fortunately, existing law already provides the tools to address deepfakes; thus new legislation is not needed. Not only does existing law adequately address this problem, but further legislating to offer a solution when several are already available risks encroaching on forms of creative expression that do not involve pornography, and are in fact protected by the First Amendment.

Existing New York and federal law provides the means to hold the purveyors of deepfakes and similar pornographic works legally accountable. There is thus no need to legislate further in this area—and doing so would risk infringement on the well-established constitutional protections afforded to editorial comment and creative expression.

Santa Clara University School of Law Professor Eric Goldman agrees with these assessments:

The answer is complicated.

The best way to get a pornographic face-swapped photo or video taken down is for the victim to claim either defamation or copyright, but neither provides a guaranteed path of success.

Although there are many laws that could apply, there is no single law that covers the creation of fake pornographic videos — and there are no legal remedies that fully ameliorate the damage that deepfakes can cause.